The Essential Guide to Kubernetes

In this article, we provide you with a guide to Kubernetes. Find out what it is, how it works, and why you should use it in certain situations.

Kubernetes is an open-source, portable system for deploying, scaling, and managing containerized applications.

Why was this system created and how is it used in the modern world? Today we’ll answer these questions.

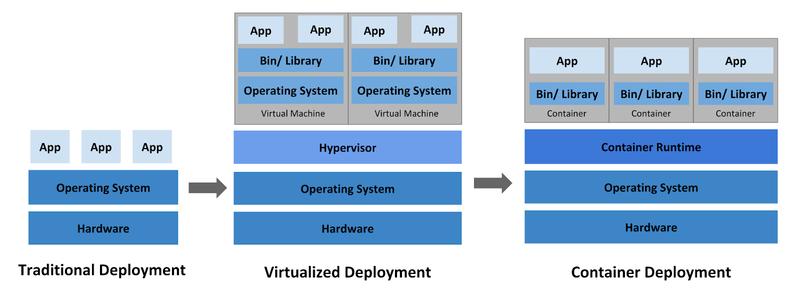

At the start of the internet era, applications were deployed and run directly on physical servers, with no way to set limits on the server resources each one could consume. This was a big problem: a single application could take up most of a server’s resources, causing the other applications on that server to perform much worse than users expected.

Running each application on its own physical server offered a partial solution, but it didn’t scale. With this setup, server resources were underutilized, and maintaining many servers that don’t use their resources to the fullest is rather expensive.

Next came the era of virtual deployment. Virtualization allows you to run multiple virtual machines on a single physical server, with applications being isolated from each other. This approach ensures a certain level of security, as the information in one application isn’t available to another application.

Each virtual machine is a full-fledged machine running all its own components, including its own operating system, on top of virtualized hardware whose resources are allocated during initialization.

Containers are similar to virtual machines, but they have relaxed isolation properties that allow applications to share an operating system. Like a virtual machine, a container has its own file system and its own share of CPU, memory, and other resources. And since containers are decoupled from the underlying infrastructure, they are portable across clouds and operating system distributions.

Advantages of containers:

- Flexible application creation and deployment. Creating a container image is simple and efficient compared to creating a virtual machine image.

- Continuous development, integration, and deployment. Containers provide for reliable and frequent builds and deployment with quick and easy rollback (due to the immutability of the image).

- Separation of tasks between Dev and Ops. You can create images of application containers during build / release and not during deployment, thereby separating application from infrastructure.

- Observability. Containers expose not only operating-system-level information and metrics but also application health and other signals.

- Identical environments in development, testing, and release. Containers work the same on a laptop and in the cloud.

- Portability across clouds and operating systems. Containers work on Ubuntu, RHEL, CoreOS, Google Kubernetes Engine, and anywhere else.

- Application-oriented management. Containers raise the level of abstraction from running an operating system on virtual hardware to running an application on an operating system using logical resources.

- Distributed, flexible, dedicated microservices. Instead of a monolithic stack on one large dedicated machine, containerized applications are divided into smaller independent parts that can be dynamically deployed and managed.

- Resource isolation. Containers offer predictable application performance.

- Efficient use of resources. Containers provide high efficiency and density.

Why do you need Kubernetes?

Containers, as practice has shown, are a great way to bundle and run applications, but they need to be managed. This is especially important in the production environment, when system downtime should be minimized.

At this point, Kubernetes comes to the rescue. It handles scaling and failover for your applications, offers deployment patterns, and does much more.

Key features of Kubernetes

Service discovery and load balancing

Kubernetes can expose a container using a DNS name or its own IP address. If traffic to the container is high, Kubernetes can balance the load and distribute network traffic so the deployment stays stable.
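To make the idea concrete, here is a minimal sketch of one stable name fronting several containers. It is a toy model, not Kubernetes code: the `Service` class, the DNS name, and the IP addresses are all hypothetical, and round-robin is assumed only for simplicity (how traffic is actually spread depends on the cluster’s configuration).

```python
from itertools import cycle


class Service:
    """Toy model: one stable DNS name in front of several container IPs."""

    def __init__(self, dns_name, backend_ips):
        self.dns_name = dns_name
        # cycle() repeats the backends forever, giving round-robin order.
        self._backends = cycle(backend_ips)

    def route(self):
        """Return the backend IP that should receive the next request."""
        return next(self._backends)


# Hypothetical name and addresses, used for illustration only.
service = Service(
    "web.default.svc.cluster.local",
    ["10.0.0.1", "10.0.0.2", "10.0.0.3"],
)

# Six requests are spread evenly over the three backends.
targets = [service.route() for _ in range(6)]
print(targets)
```

Clients only need to know the service name; the set of backends behind it can change without the clients noticing.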

Storage orchestration

Kubernetes allows you to automatically mount the storage system of your choice, such as local storage or a public cloud service.

Automated deployment and rollbacks

Using Kubernetes, you can describe the desired state of your deployed containers, and Kubernetes changes the actual state to the desired state at a controlled rate. For example, you can automate Kubernetes to create new containers for a deployment, remove existing containers, and adopt all their resources to the new containers.
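The desired-state idea can be sketched as a small reconciliation function. This is a simplified illustration, not how Kubernetes is implemented: the `reconcile` function and the image names are hypothetical, and a real rollout replaces containers gradually rather than all at once.

```python
def reconcile(desired_image, desired_replicas, running):
    """Return the actions that move `running` toward the desired state.

    `running` is a list of image names, one entry per running container.
    """
    actions = []

    # Containers running a different image than desired must go.
    outdated = [image for image in running if image != desired_image]
    up_to_date = [image for image in running if image == desired_image]
    for image in outdated:
        actions.append(("delete", image))

    # Create or delete up-to-date containers until the count matches.
    missing = desired_replicas - len(up_to_date)
    for _ in range(max(missing, 0)):
        actions.append(("create", desired_image))
    for _ in range(max(-missing, 0)):
        actions.append(("delete", desired_image))

    return actions


# Hypothetical rollout from app:v1 to app:v2 with three replicas.
actions = reconcile("app:v2", 3, ["app:v1", "app:v1", "app:v2"])
print(actions)
```

The caller states only what it wants (image and replica count); the function works out which containers to create and which to delete.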

Automatic bin packing

You provide Kubernetes with a cluster of nodes that it can use to run containerized tasks, and you tell it how much CPU and memory (RAM) each container needs. Kubernetes then fits containers onto your nodes to make the best use of their resources.
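As a rough illustration of fitting containers onto nodes, the sketch below uses a simple first-fit strategy. This is an assumption made for the example: the real Kubernetes scheduler filters and scores nodes in a more elaborate way. The `Node` class, `schedule` function, and all names and sizes are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_millicores: int  # free CPU, in thousandths of a core
    memory_mib: int      # free memory, in MiB
    containers: list = field(default_factory=list)


def schedule(containers, nodes):
    """Place each (name, cpu, memory) request on the first node with room.

    Returns a dict mapping container name to node name, or to None when
    no node has enough free CPU and memory.
    """
    placement = {}
    for name, cpu, memory in containers:
        placement[name] = None
        for node in nodes:
            if node.cpu_millicores >= cpu and node.memory_mib >= memory:
                # Reserve the resources so later requests see what is left.
                node.cpu_millicores -= cpu
                node.memory_mib -= memory
                node.containers.append(name)
                placement[name] = node.name
                break
    return placement


nodes = [Node("node-a", 1000, 2048), Node("node-b", 2000, 4096)]
requests = [
    ("api", 500, 1024),
    ("worker", 800, 1024),
    ("cache", 400, 512),
    ("batch", 3000, 512),  # too large for any node in this example
]
placement = schedule(requests, nodes)
print(placement)
```

Declaring resource needs up front is what makes this kind of placement possible: without them, the scheduler could not tell whether a node has room.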

Self-healing

Kubernetes restarts containers that fail, replaces containers, shuts down containers that don’t respond to a user-defined health check, and doesn’t advertise them to clients until they’re ready to serve.
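A minimal sketch of this behavior, assuming a health check is simply a function that returns `True` or `False` for a container. The `self_heal` function and the container names are hypothetical; in Kubernetes itself, health checks are configured as probes on the container.

```python
from typing import Callable, List, Tuple


def self_heal(
    containers: List[str],
    health_check: Callable[[str], bool],
) -> Tuple[List[str], List[str]]:
    """Split containers into those ready for traffic and those to restart."""
    ready, to_restart = [], []
    for name in containers:
        if health_check(name):
            # Only healthy containers are advertised to clients.
            ready.append(name)
        else:
            # Unhealthy containers are restarted and stay hidden meanwhile.
            to_restart.append(name)
    return ready, to_restart


# Hypothetical state: web-2 is failing its health check.
failing = {"web-2"}
ready, to_restart = self_heal(
    ["web-1", "web-2", "web-3"],
    lambda name: name not in failing,
)
print("ready:", ready)
print("restart:", to_restart)
```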

Confidential information and configuration management

Kubernetes can store and manage confidential information such as passwords, OAuth tokens, and SSH keys. You can deploy and update confidential information and application configurations without rebuilding container images and without exposing sensitive information in the stack configuration.
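As an illustration, the sketch below builds a Secret manifest as a Python dictionary and prints it as JSON. The helper `make_secret`, the secret name, and the password are hypothetical. Values in a Secret’s `data` field are base64-encoded; note that base64 is an encoding, not encryption, so the manifest itself still needs to be protected.

```python
import base64
import json


def make_secret(name, values):
    """Build a Secret manifest whose values are base64-encoded."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


# Hypothetical secret name and value, for illustration only.
secret = make_secret("db-credentials", {"password": "s3cr3t"})
print(json.dumps(secret, indent=2))
```

Because the secret lives in its own object, the container image never contains the password and can be reused unchanged across environments.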

Microservice architecture

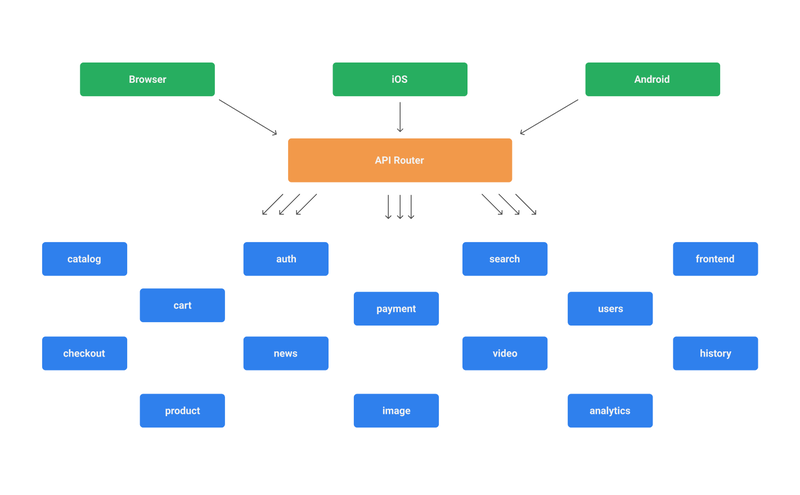

A microservice architecture is a distributed system built from microservices, each of which fulfills an assigned role in your application. It defines how the service layers interact and sets out the principles that guide the further design and evolution of the system.

Now let’s consider what a microservice architecture might look like. The easiest way to demonstrate this is with a diagram:

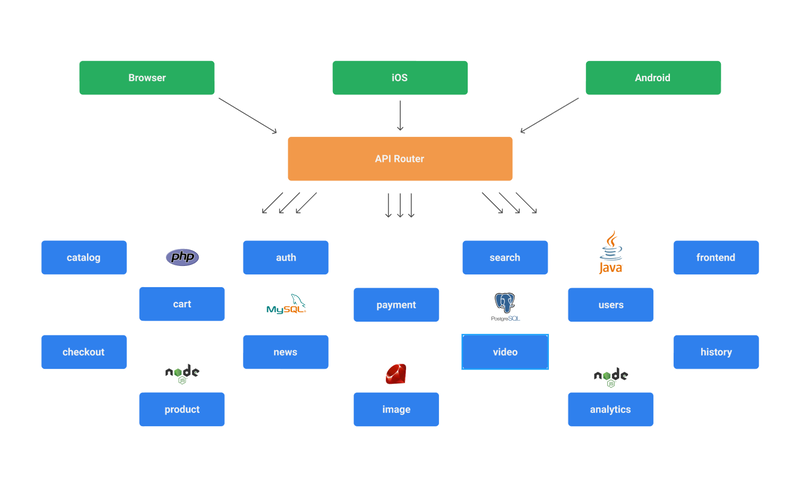

It’s usually the case that microservices are implemented in various programming languages and use various platforms. This is a very real scenario for this type of architecture, as there are often different development teams working on different services. There’s also a good chance of using different databases on each individual service layer.

As a result, we can consider the following scheme:

This is far from the worst implementation of a microservice architecture, but the most basic problems of this approach are already visible: namely, the problems of support and further technical growth.

It’s important to understand that a microservice architecture is implemented and used when there’s a really crucial need tied to the technical features of the project and the stages of its growth. Do not succumb to the trend and immediately decide you need to create a microservice architecture, as it entails great technical and financial obligations to ensure stable performance.

Therefore, if you’re a customer or a programmer designing an application architecture and you aren’t sure you really need microservices, consider starting with a monolithic application. You can revisit the decision when a specific part of the application needs to be moved to a separate service layer.

Of course, there are applications that need to be split into separate layers for receiving and processing information from the start. These include applications that work directly with IoT devices, which can send information to a server almost continuously; that information must be processed in real time and at high throughput.

In this case, you can implement a backend for a mobile application, let’s say in PHP, Python, or Ruby. The server that processes incoming information from devices would be written in Node.js, which in turn would write the necessary information to the database and initiate the launch of tasks in the queue for further information processing.

The functionality of the queues themselves can also be put in a separate layer for load balancing, in which case you’ll get a fairly transparent and scalable service architecture that can be built based on containers and the Kubernetes platform.
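The ingest-and-queue flow described above can be sketched in a few lines. This is only an in-process illustration in Python (the article’s example assumes Node.js for this service); the function names, the in-memory `db` list, and the device readings are all hypothetical stand-ins for a real database and a real queue service.

```python
from queue import Queue


def ingest(readings, db, tasks):
    """Store each device reading and enqueue a follow-up processing task."""
    for reading in readings:
        db.append(reading)               # stand-in for a database write
        tasks.put(reading["device_id"])  # stand-in for a queued job


def drain(tasks):
    """Process queued tasks in arrival order; return the devices handled."""
    processed = []
    while not tasks.empty():
        processed.append(tasks.get())
    return processed


db, tasks = [], Queue()
ingest(
    [
        {"device_id": "sensor-1", "value": 21.5},
        {"device_id": "sensor-2", "value": 19.0},
    ],
    db,
    tasks,
)
processed = drain(tasks)
print(processed)
```

Because ingestion and processing only meet at the queue, each side can run in its own container and be scaled independently.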

Final thoughts

Now you understand the basics of Kubernetes and know when it’s appropriate to use it. Here are some key takeaways:

- Kubernetes is an open-source, portable system for deploying, scaling, and managing containerized applications.

- Containers are similar to virtual machines, but they have relaxed isolation properties that allow applications to share an operating system.

- Kubernetes handles scaling and failover for applications, offers deployment patterns, and does much more.
